I admit that I often use this as a mental reminder rather than something to populate, as my preference is to speak to a developer on my team immediately after a session to investigate or question. (I don’t raise bugs, I describe behaviour. Writing this, I realise that’s probably what my next post will be on; I’ll add a link to it here when it’s done.) Only if this isn’t possible due to availability will I actually fill things in from the notes I have taken during sessions. For me, this is a disposable document with a short shelf life, used to capture, discuss, resolve (or not) and, most importantly, discard.

I’ve reproduced the template in bullet form rather than embed a PDF or Word document; that way I hope it will be easier for you guys to take away and make your own. When you get to the questions you might find, as I quite often do, that you remove some before you start as not applicable, or that you haven’t filled some in by the time you’ve finished. It’s supposed to be flexible like that, but you should take a moment to understand why they are not populated or not applicable to the session, as that may prompt some other thoughts.

The template:

- The Basics: Date; App/function under test (brief description); any other useful information depending on your context

- Any dependencies vital to the testing (connections, files, data, hardware etc.; this helps make sure you have them before you start)

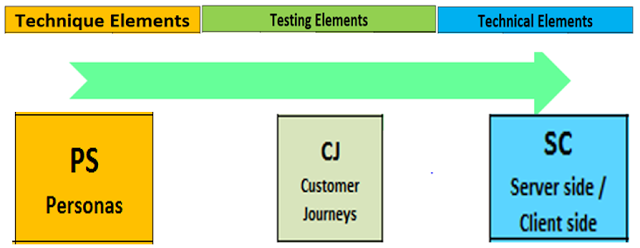

- Any information that is useful, such as material/learnings from previous sessions, personas to use, environments, tools etc.

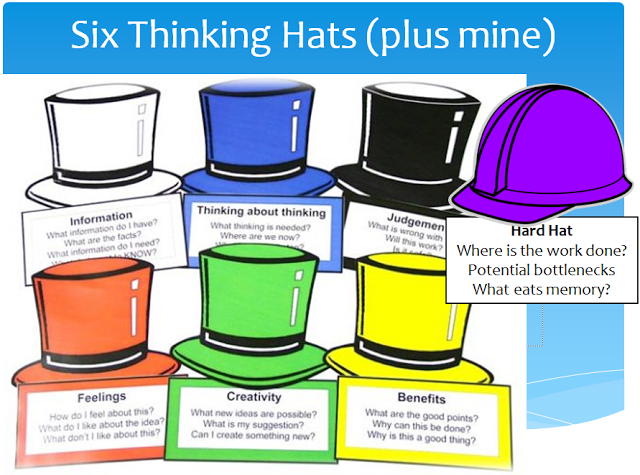

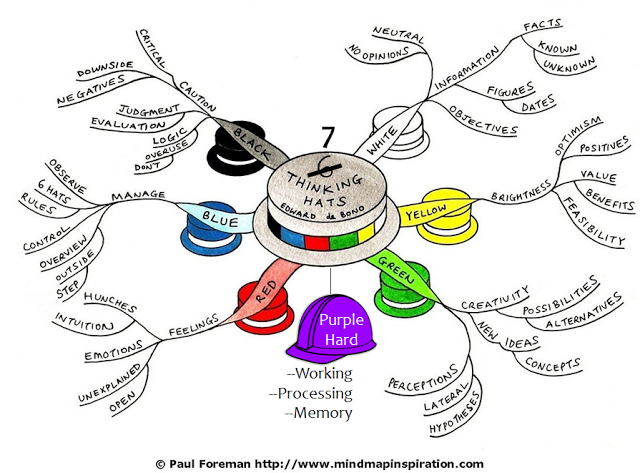

- Test strategy (a consideration of techniques you might use, as a flexible plan is often more useful than no plan; but don’t be afraid to improvise, as that’s half the fun and discovery may make your plan obsolete quite quickly)

- *Metrics (see rant at the end of this post)

- The questions: (with a brief reasoning for them)
  - What do I expect? (even if it is something brand new I always have some expectations)
  - What do I assume? (sets a context that I can query as I go)
  - Are there any risks I should be aware of? (to execution, the system, helps anyone else reading have context)
  - What do I notice? (behaviour; usability)
  - What do I suspect? (things that I feel, not always based on facts but that I don’t want to lose)
  - What am I puzzled by? (behaviour that doesn’t feel right)
  - What am I afraid of? (high priority concerns about the item under test)
  - What do I appreciate/like? (always good to have some positive feedback)

- Debrief (originally between the tester and a manager; there’s a checklist of questions on satisfice/sbtm). My version is more often a conversation with the developer about questions or queries, but it can also be with the product owner or a stakeholder, depending on what I find and the context. I’m not saying don’t do this; rather, do it only where it’s going to add value.
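If you prefer to keep the sheet in code rather than on paper, the template above could be sketched roughly like this. It is only an illustration: the class name `SessionSheet`, its fields and its helper methods are all made up for this example, as are the sample values at the bottom. The one idea it tries to capture is the difference between a question you removed up front and one you kept but never filled in.

```python
from dataclasses import dataclass, field
from datetime import date

# The eight prompts from the template, in the order they are listed above.
QUESTIONS = [
    "What do I expect?",
    "What do I assume?",
    "Are there any risks I should be aware of?",
    "What do I notice?",
    "What do I suspect?",
    "What am I puzzled by?",
    "What am I afraid of?",
    "What do I appreciate/like?",
]


@dataclass
class SessionSheet:
    """Hypothetical container for one session; all names are illustrative."""
    session_date: date
    under_test: str
    dependencies: list[str] = field(default_factory=list)
    useful_info: list[str] = field(default_factory=list)
    strategy: str = ""
    # Question text -> free-form notes. A question removed as not
    # applicable is deleted from this dict entirely.
    answers: dict[str, list[str]] = field(
        default_factory=lambda: {q: [] for q in QUESTIONS}
    )

    def drop(self, question: str) -> None:
        """Remove a question before the session because it does not apply."""
        self.answers.pop(question, None)

    def note(self, question: str, text: str) -> None:
        """Add a note to a question still on the sheet (KeyError if dropped)."""
        self.answers[question].append(text)

    def removed(self) -> list[str]:
        """Questions taken off the sheet before the session started."""
        return [q for q in QUESTIONS if q not in self.answers]

    def unanswered(self) -> list[str]:
        """Questions kept on the sheet but left empty at the end."""
        return [q for q, notes in self.answers.items() if not notes]


# Invented sample values, purely to show the shape of a session.
sheet = SessionSheet(date(2020, 1, 1), "Login form", strategy="boundary values")
sheet.drop("What am I afraid of?")
sheet.note("What do I notice?", "Error message disappears after two seconds")
print("Removed:", sheet.removed())
print("Left empty:", sheet.unanswered())
```

The two lists printed at the end are the ones worth a moment's thought after the session, and the whole object can be thrown away once the conversation has happened.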

This post is getting a bit longer than I’d hoped, but I feel it’s important to summarise the benefits and possible drawbacks of using this method so there’s a balanced view.

| Pros | Cons |
| --- | --- |
| Allows control to be added to an ‘uncontrolled process’ | Can be harder to replicate findings as full details are not captured |
| Makes exploratory testing auditable | As a ‘new’ technique it has to be learnt |
| Testing can start almost immediately | Recording exploratory testing (rather than brief notes) can break focus/concentration if you’re more worried about doing it |
| Makes exploratory testing measurable through metrics gathered | Time is required to analyse and report on metrics |
| Flexible process that can be tailored to multiple situations | Time is required to discuss/give feedback to potentially the ‘wrong’ person |
| Biggest and most important issues often found first | |
| Can help explain what testers do to clients, stakeholders and the uninformed | |

Given all the above, if you have to justify exploratory testing (notwithstanding that you should be looking for a new job!), then using session-based test management, either in its original form or some hybrid version, could be the convincer you’re looking for. In the table above, ‘management’ will generally only see the Pros column, which covers a lot of the things ‘they’ will worry about. But seriously, look for a new job!

*Metrics: I personally don’t think these are useful for virtually anything (oh, more controversy!), but if you absolutely have to report back to someone, for example a manager who knows little or nothing about what testing really is, here are some metric examples.

How much time you’ve spent (from start/end times, for example) on actually executing testing, being blocked, and recording findings; actionable insights; questions/queries; potential problems; bugs; obstacles; and screenshots, or some other recording or documents, that show any issues and help replicate them.
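If time spent is the number you are asked for, a few lines of script can add it up from start/end times jotted down during the session. This is a rough sketch, not part of the original method: the function name `time_by_activity`, the activity labels and the log entries are all invented for illustration, and it assumes every entry starts and ends on the same day.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def time_by_activity(entries):
    """Sum minutes per activity from (activity, "HH:MM", "HH:MM") tuples."""
    totals = defaultdict(timedelta)
    for activity, start, end in entries:
        begin = datetime.strptime(start, "%H:%M")
        finish = datetime.strptime(end, "%H:%M")
        totals[activity] += finish - begin
    return {name: int(spent.total_seconds() // 60) for name, spent in totals.items()}


# Invented sample log for one session.
log = [
    ("testing", "09:00", "09:40"),
    ("blocked", "09:40", "09:50"),
    ("recording", "09:50", "10:00"),
    ("testing", "10:00", "10:30"),
]

minutes = time_by_activity(log)
total = sum(minutes.values())
for activity, spent in minutes.items():
    # Show each activity's minutes and its share of the whole session.
    print(f"{activity}: {spent} min ({spent / total:.0%})")
```

The other items in the list (questions, potential problems, bugs and so on) are simple tallies, so they rarely need more than counting the notes you already have.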